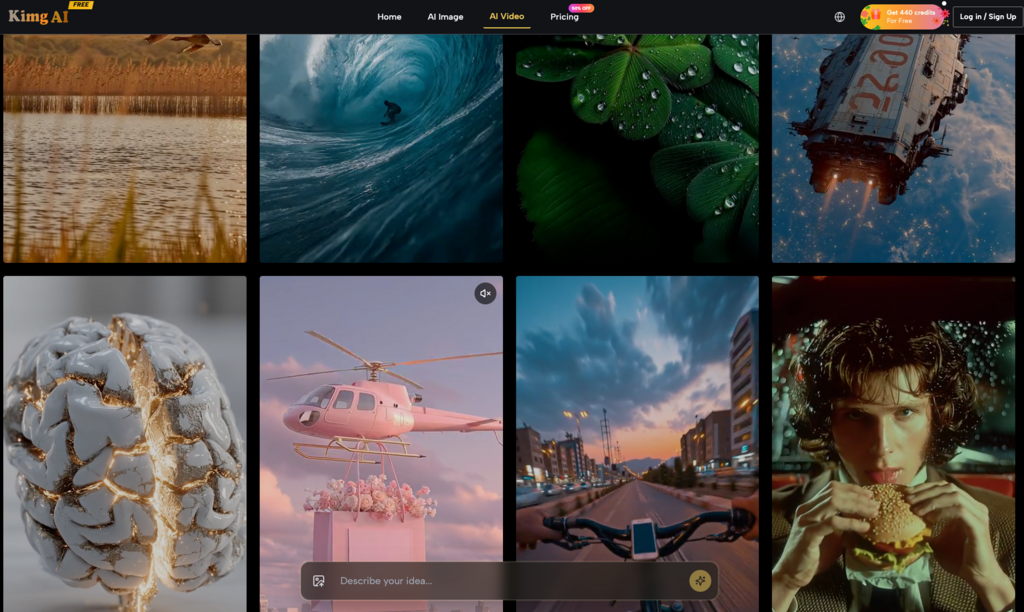

The “hard truth” of performance marketing in the generative era is that most AI-generated video is commercially useless. You see the polished demos on social media—cinematic pans, fluid motion, flawless lighting—but when your team attempts to replicate those results for a product launch or a high-spend social campaign, the output is often a smeary, incoherent mess. Characters morph into furniture, logos melt into the background, and the “uncanny valley” effect alienates the very audience you are trying to convert.

The failure isn’t usually in the motion model itself. It is in the starting point. Most marketers approach AI video with a “Text-to-Video” mindset, hoping a paragraph of descriptive prose will magically result in a high-performing 15-second ad. In reality, professional-grade AI video is built on an “Image-to-Video” (I2V) workflow. If your first frame is technically weak or compositionally cluttered, no amount of compute power can save the downstream motion. To scale ad creative, you must treat the first frame not as a static visual, but as a technical blueprint for every motion vector that follows.

The First Frame Fallacy in Performance Marketing

In a high-volume creative environment, unpredictability is the enemy of ROI. Text-to-video models are inherently stochastic; they take massive creative leaps that often ignore brand guidelines. A prompt like “a person drinking a soda on a beach” might give you ten different art styles, three different bottle shapes, and a dozen lighting scenarios. For a performance marketer, this randomness translates to wasted render credits and hours of manual curation.

Production teams are increasingly standardizing on I2V for exactly this reason. By using a tool like Banana AI to establish the “Anchor Frame” first, you lock in the brand’s visual DNA (the specific color palette, product geometry, and lighting) before a single frame of motion is rendered. This approach shifts the creative burden from the unpredictable motion engine to the much more controllable image engine. If you cannot get the product to look right in a static shot, it will never look right at 24 frames per second.

Precision Composition as the Foundation for Predictable Motion

To understand why AI video breaks, you have to look at how these models interpret pixels. When a motion model “animates” an image, it essentially estimates how groups of pixels should shift relative to one another over time. If your source image lacks clearly defined edges or depth cues, the model struggles to separate the subject from the background. This leads to “ghosting” artifacts, where a product seems to bleed into the environment as it moves.
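The ambiguity is easiest to see in a toy version of motion estimation. The sketch below is a simplified block-matching illustration over a single row of pixels. It does not describe how Banana AI or any particular video model works internally. It tries every candidate shift for a small patch and reports which shifts match best. On a flat, edgeless row every shift matches equally well, so the motion is undetermined. On a row with a sharp edge, exactly one shift wins. The pixel values and patch sizes are arbitrary illustrative choices.

```python
def sad(a: list[int], b: list[int]) -> int:
    """Sum of absolute differences between two equal-length pixel runs."""
    return sum(abs(x - y) for x, y in zip(a, b))


def best_shifts(frame0: list[int], frame1: list[int],
                start: int, size: int, max_shift: int) -> list[int]:
    """All shifts (in pixels) that best explain where frame0[start:start+size] moved in frame1."""
    patch = frame0[start:start + size]
    scores = {}
    for shift in range(-max_shift, max_shift + 1):
        lo = start + shift
        if lo < 0 or lo + size > len(frame1):
            continue  # candidate window would fall off the edge of the row
        scores[shift] = sad(patch, frame1[lo:lo + size])
    best = min(scores.values())
    return [s for s, score in scores.items() if score == best]


# Flat, low-contrast row: even if the object moves, nothing in the pixels shows it.
flat0, flat1 = [100] * 20, [100] * 20
print(best_shifts(flat0, flat1, start=8, size=4, max_shift=3))  # [-3, -2, -1, 0, 1, 2, 3]

# Row with a hard edge (dark background, bright product) that moves 2 pixels right.
edge0 = [20] * 10 + [200] * 10
edge1 = [20] * 12 + [200] * 8
print(best_shifts(edge0, edge1, start=8, size=4, max_shift=3))  # [2]
```

Real motion models are far more sophisticated than this, but the principle carries over. Without visible edges and texture, the estimated motion has nothing to anchor to.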

Establishing Depth and Separation

A successful source asset requires high contrast between the subject and the background. If you are generating a hero shot of a luxury watch, the lighting needs to define the edges of the casing clearly. This isn’t just about aesthetics; it’s about providing the AI with a “map” of where one object ends and another begins.
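You can turn “high contrast between the subject and the background” into a number you check before spending render credits. One option is to compare the average luminance of the two regions. The sketch below is a heuristic of our own, not a Banana AI feature. It assumes the subject and background pixels are already separated, for example with a mask, and it borrows the WCAG relative-luminance and contrast-ratio formulas. The 3.0 threshold is an arbitrary assumption to tune against your own render results.

```python
from statistics import mean

RGB = tuple[int, int, int]


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(pixel: RGB) -> float:
    """WCAG relative luminance of one sRGB pixel (0.0 = black, 1.0 = white)."""
    r, g, b = (_linearize(v) for v in pixel)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(subject: list[RGB], background: list[RGB]) -> float:
    """WCAG-style contrast ratio between the mean luminance of two pixel regions."""
    l_subject = mean(relative_luminance(p) for p in subject)
    l_background = mean(relative_luminance(p) for p in background)
    lighter, darker = max(l_subject, l_background), min(l_subject, l_background)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical threshold: flag frames whose subject barely separates from the backdrop.
MIN_SEPARATION = 3.0


def has_clear_separation(subject: list[RGB], background: list[RGB]) -> bool:
    return contrast_ratio(subject, background) >= MIN_SEPARATION


# A silver watch case against a near-black backdrop vs. a grey product on a grey set.
print(has_clear_separation([(200, 200, 200)], [(20, 20, 20)]))     # True
print(has_clear_separation([(128, 128, 128)], [(110, 110, 110)]))  # False
```

Averaging whole regions is deliberately crude. A frame can pass this check and still have a weak edge at one spot on the product, so treat this as a quick first filter rather than a guarantee.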

The Cost of Messy Source Frames

From a commercial perspective, using a low-quality or cluttered source frame is expensive. Every failed render is a loss of time and budget. By adopting a “Source-First” mindset, you reduce the AI’s need to “hallucinate” missing details. When the motion model has a high-fidelity image to work with, its job is simplified to physics and movement, rather than trying to figure out what the object actually is.

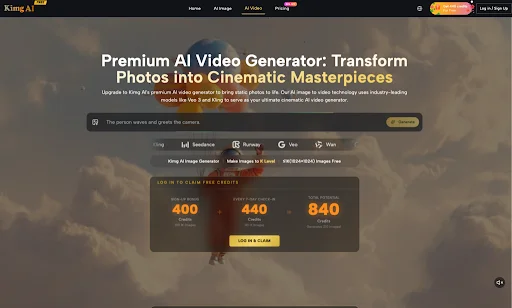

Generating High-Fidelity Source Assets via Banana AI

The first step in a professional pipeline is dialing in the aesthetic. Using the core Banana AI model allows creators to iterate on the prompt-to-image loop until the visual matches the campaign’s creative direction. This is where you solve for texture, lighting, and brand consistency.

For example, if you are running a campaign for a skincare brand, the skin texture in the first frame must be realistic—neither too plastic nor too porous. By focusing your prompting efforts here, you ensure that the “Base Reality” of the ad is solid. Once you have an image that satisfies the brand’s requirements, you are no longer asking the video generator to “create” a skincare ad; you are simply asking it to “animate” a pre-approved visual. This significantly increases the probability of a usable output on the first render.

Why Nano Banana Pro AI Solves for Downstream Motion Artifacts

As you move from a rough concept to a production-ready asset, resolution becomes a functional requirement, not a luxury. This is where Nano Banana Pro AI enters the workflow. One of the most common issues in AI video is “pixel crawl” or blurring during camera pans. This happens when the source image lacks the necessary density of data for the model to track fine details.

The “K-Level” Advantage

By generating or upscaling to 2K or 4K (“K-level”) resolution through Nano Banana Pro AI, you give the motion engine far more pixel data to work with. When the virtual camera “moves” across a high-resolution surface, the model can preserve fine textures, like the weave of a fabric or the condensation on a cold can, instead of letting them dissolve into digital noise.

Format Flexibility and Aspect Ratios

Performance marketing demands assets for various placements, from 9:16 Reels to 16:9 YouTube pre-rolls. A high-resolution source frame gives you room to outpaint and crop. If you start with a centered, high-fidelity image, you can adapt that single asset into multiple formats without losing the sharpness that high-converting ads require. Nano Banana Pro provides the resolution headroom needed to keep the video crisp even after zooming or cropping.
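The cropping argument is easy to check with arithmetic. The sketch below computes the largest centered crop of a given aspect ratio that fits inside a source frame. It then checks whether that crop still meets a delivery resolution without upscaling. The square 1024 and 4096 pixel sources and the 1080 × 1920 Reels target are illustrative assumptions, not Nano Banana Pro output specifications.

```python
from fractions import Fraction
from typing import NamedTuple


class Crop(NamedTuple):
    x: int
    y: int
    width: int
    height: int


def center_crop(src_w: int, src_h: int, ratio_w: int, ratio_h: int) -> Crop:
    """Largest centered crop with aspect ratio ratio_w:ratio_h inside a src_w x src_h frame."""
    target = Fraction(ratio_w, ratio_h)
    if Fraction(src_w, src_h) > target:
        # Source is wider than the target: keep the full height, trim the sides.
        height = src_h
        width = int(src_h * target)
    else:
        # Source is taller than (or equal to) the target: keep the full width, trim top and bottom.
        width = src_w
        height = int(src_w / target)
    return Crop((src_w - width) // 2, (src_h - height) // 2, width, height)


def meets_delivery(crop: Crop, out_w: int, out_h: int) -> bool:
    """True if the crop can be downscaled (never upscaled) to the delivery size."""
    return crop.width >= out_w and crop.height >= out_h


reels = center_crop(4096, 4096, 9, 16)
print(reels, meets_delivery(reels, 1080, 1920))  # Crop(x=896, y=0, width=2304, height=4096) True
small = center_crop(1024, 1024, 9, 16)
print(small, meets_delivery(small, 1080, 1920))  # Crop(x=224, y=0, width=576, height=1024) False
```

The same 4096-pixel source also yields a 4096 × 2304 crop for 16:9. One master frame can therefore serve both placements at full delivery sharpness. A 1024-pixel source would have to be upscaled for either one.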

The Economic Reality of Renders: Success Rates and Scalability

There is a measurable business metric that many creative teams overlook: the Render Success Rate (RSR), the share of video renders that produce a usable clip. If your team has to run 10 video prompts to get one usable 5-second clip, your RSR is 10%. Your cost per asset is then 10 times what it would be if every render were usable.
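To make the metric concrete, here is a minimal sketch. It treats RSR as usable clips divided by total renders and derives the expected cost per usable clip from it. The 0.50 per-render price is a placeholder assumption. Substitute your own tool's pricing.

```python
def render_success_rate(usable_clips: int, total_renders: int) -> float:
    """RSR = usable clips / total renders attempted."""
    if total_renders <= 0:
        raise ValueError("total_renders must be positive")
    return usable_clips / total_renders


def cost_per_usable_clip(cost_per_render: float, rsr: float) -> float:
    """On average you pay for 1 / RSR renders for every usable clip."""
    if not 0 < rsr <= 1:
        raise ValueError("rsr must be in (0, 1]")
    return cost_per_render / rsr


# Hypothetical pricing: 0.50 per 5-second render, 1 usable clip out of 10 renders.
rsr = render_success_rate(usable_clips=1, total_renders=10)
print(rsr)                              # 0.1
print(cost_per_usable_clip(0.50, rsr))  # 5.0, i.e. 10x the per-render price
```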

Investing in the Image Phase to Save in the Video Phase

The time spent perfecting an image in Nano Banana Pro is usually less than the time spent rerunning failed video renders. A well-composed, high-resolution image might take 5 to 10 minutes of prompting and upscaling, but it can yield a usable video on the first or second try. In a high-volume agency setting, this efficiency gain is the difference between a profitable AI implementation and a money sink.
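The “first or second try” claim can be framed as a probability. Suppose each render succeeds independently with probability equal to the RSR. This is a simplification, because renders from the same source frame are correlated. Under that assumption, the chance of at least one usable clip within n attempts is 1 − (1 − RSR)^n. The sketch below compares a hypothetical 10% RSR with a hypothetical 50% RSR. Neither figure is a measured benchmark.

```python
def p_success_within(rsr: float, attempts: int) -> float:
    """Chance of at least one usable clip in `attempts` independent renders."""
    return 1 - (1 - rsr) ** attempts


def attempts_needed(rsr: float, confidence: float) -> int:
    """Fewest renders that reach the target chance of at least one usable clip."""
    if not 0 < rsr <= 1 or not 0 < confidence < 1:
        raise ValueError("rsr must be in (0, 1] and confidence in (0, 1)")
    n = 1
    while p_success_within(rsr, n) < confidence:
        n += 1
    return n


# Hypothetical RSRs: a cluttered source frame vs. a polished one.
for rsr in (0.1, 0.5):
    print(f"RSR {rsr:.0%}: {p_success_within(rsr, 2):.0%} within 2 tries, "
          f"{attempts_needed(rsr, 0.9)} tries for 90% confidence")
# RSR 10%: 19% within 2 tries, 22 tries for 90% confidence
# RSR 50%: 75% within 2 tries, 4 tries for 90% confidence
```

Under these assumptions, raising the RSR cuts the number of renders budgeted per usable clip from 22 to 4. That saving is what pays for the extra minutes spent in the image phase.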

Building a Reusable Asset Library

A library of random 4-second videos is a liability; it is difficult to search, edit, or repurpose. However, a library of high-quality, well-composed images is a scalable asset. You can take a single high-fidelity image and apply different motion paths—zoom, pan, tilt—using various I2V tools to create a dozen different ad variations. This modular approach is only possible if the first frame is of sufficient quality.

Limits of Current Models and When to Stay Static

Despite the rapid advancement of tools like Nano Banana Pro AI, it is critical to maintain a level of skepticism regarding what AI can currently handle. Not every concept is a good candidate for motion, and forcing it can lead to a decrease in ROAS (Return on Ad Spend).

The Struggle with Text and Physics

AI motion models still struggle to keep text legible within a moving frame. If your ad requires a specific promotional code or a clear call-to-action to appear on a moving product, the motion will often distort the letters. In these cases, it is almost always better to start from a high-quality static frame from Banana AI and add the text as a conventional graphic overlay in post-production. The text stays sharp because the motion model never touches it.
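One common way to add a text overlay in post-production is ffmpeg's `drawtext` filter. The minimal sketch below only builds the command. It assumes an ffmpeg build with `drawtext` support (libfreetype), which the sketch does not check. The file names, font path, promo text, and styling values are hypothetical placeholders. Because the text is drawn onto each finished frame, the letters keep the same shape throughout the clip.

```python
import re
import shlex

# drawtext treats characters such as ':' , "'" and '%' specially; this sketch
# sidesteps escaping by accepting only simple characters.
SAFE_TEXT = re.compile(r"[A-Za-z0-9 !.\-]+")


def overlay_command(src: str, dst: str, text: str, font: str,
                    font_size: int = 64, bottom_margin: int = 80) -> list[str]:
    """Build an ffmpeg command that burns a centered, boxed caption near the bottom."""
    if not SAFE_TEXT.fullmatch(text):
        raise ValueError("text needs drawtext escaping; this sketch accepts simple characters only")
    drawtext = ":".join([
        f"fontfile={font}",          # font path must not contain ':' in this sketch
        f"text='{text}'",
        "fontcolor=white",
        f"fontsize={font_size}",
        "x=(w-text_w)/2",            # horizontally centered
        f"y=h-text_h-{bottom_margin}",
        "box=1",                     # semi-transparent backing box for legibility
        "boxcolor=black@0.5",
        "boxborderw=20",
    ])
    return ["ffmpeg", "-y", "-i", src, "-vf", f"drawtext={drawtext}", "-c:a", "copy", dst]


cmd = overlay_command("hero_clip.mp4", "hero_clip_cta.mp4", "CODE SAVE20", "/path/to/Brand-Bold.ttf")
print(shlex.join(cmd))
# import subprocess; subprocess.run(cmd, check=True)  # run only where ffmpeg is installed
```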

Uncertainty in Complex Interactions

Similarly, interactions between humans and objects, such as a hand opening a complicated latch, often produce physical impossibilities like fingers merging with the object. The more complex the motion requirements, the higher the “hallucination” rate climbs.

The “Stay Static” Checklist

Before moving a concept into the video phase, marketers should ask:

- Does the motion add actual narrative value or just “visual noise”?

- Is the product geometry too complex for the current state of I2V?

- Would a high-fidelity static image with a simple Ken Burns effect convert better than a distorted video?
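A Ken Burns effect is simply a slow zoom, optionally with a pan, over a still image. Because no pixels are regenerated, it cannot distort the product. The minimal sketch below computes one crop window per frame. Resizing each window back to the output size, with whatever image or video tool your pipeline already uses, produces the move. The smoothstep easing, the 1.15 default end zoom, and the focus-point parameter are arbitrary choices of this sketch, picked to keep the motion subtle.

```python
from typing import NamedTuple


class Window(NamedTuple):
    x: float
    y: float
    width: float
    height: float


def ken_burns_windows(src_w: int, src_h: int, frames: int,
                      end_zoom: float = 1.15,
                      focus: tuple[float, float] = (0.5, 0.5)) -> list[Window]:
    """Crop windows for a slow push-in toward `focus` (given as fractions of width/height)."""
    if frames < 2 or end_zoom < 1:
        raise ValueError("need at least two frames and end_zoom >= 1")
    fx, fy = focus[0] * src_w, focus[1] * src_h
    windows = []
    for i in range(frames):
        t = i / (frames - 1)           # 0.0 on the first frame, 1.0 on the last
        t = t * t * (3 - 2 * t)        # smoothstep easing: gentle start and stop
        zoom = 1 + (end_zoom - 1) * t
        w, h = src_w / zoom, src_h / zoom  # same aspect ratio as the source
        # Drift the window center from the frame center toward the focus point,
        # then clamp so the window never leaves the source image.
        cx = src_w / 2 + (fx - src_w / 2) * t
        cy = src_h / 2 + (fy - src_h / 2) * t
        x = min(max(cx - w / 2, 0), src_w - w)
        y = min(max(cy - h / 2, 0), src_h - h)
        windows.append(Window(x, y, w, h))
    return windows


# 120 frames at 24 fps is a 5-second move, ending 20% tighter on the center.
moves = ken_burns_windows(1920, 1080, frames=120, end_zoom=1.2)
print(moves[0])
print(moves[-1])
```

The first window covers the full frame and the last is roughly 1600 × 900 centered on the image. Moving `focus` toward the product drifts the push-in onto it.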

In many cases, a stunningly sharp, K-level image from Nano Banana Pro is more effective than a medium-quality video. The goal of using AI in marketing isn’t to make “cool videos”—it is to generate attention and conversion. If the motion compromises the perceived quality of the brand, it is a net negative.

Ultimately, the success of your AI creative pipeline depends on your ability to control the input. By mastering the composition, resolution, and technical fidelity of the first frame, you turn AI video from a game of chance into a repeatable, scalable engine for performance marketing. High-quality motion is a byproduct of high-quality source material; there are no shortcuts to that fundamental truth.