For the professional designer or video editor, the initial thrill of generative AI usually lasts about five minutes. It ends the moment a client asks to move a window three inches to the left or replace a specific model’s jacket without altering their facial expression. In the traditional “prompt-and-pray” workflow, these requests are a death sentence. You re-roll the prompt, and the entire composition shifts. The lighting changes, the perspective tilts, and the “magic” of the first generation becomes a liability.

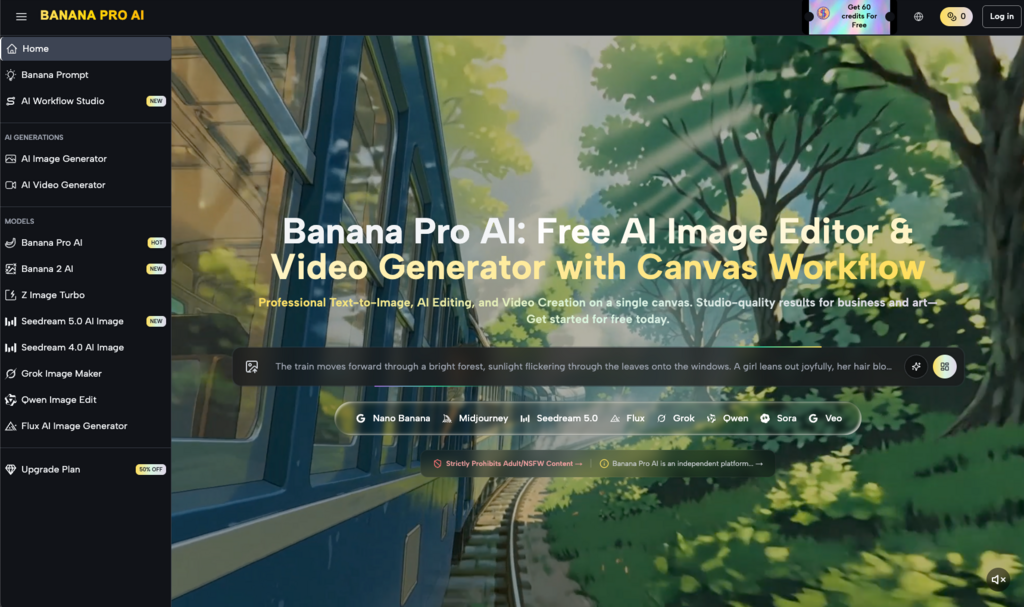

The real gap in production isn’t the ability to generate a beautiful image; it is the ability to control it. Professional workflows require a shift from holistic generation to iterative regional editing. This is where tools like Nano Banana Pro are beginning to differentiate themselves from the sea of generic wrappers. By focusing on the canvas rather than just the chat box, editors can treat AI-generated assets as living files rather than static exports.

The Myth of the Perfect Prompt

There is a persistent narrative that “prompt engineering” is the primary skill of the future. In a high-stakes production environment, this is rarely true. A prompt is a blunt instrument. It describes a general intent but lacks the spatial precision required for architectural visualization, product photography, or character-consistent video.

When working with Nano Banana, the objective isn’t to get the image right in one shot. It is to get the composition “mostly right” and then use regional changes to refine the details. This iterative approach mirrors the traditional design process: sketch, block out, refine, and polish. Relying on a single prompt to handle every variable—lighting, lens choice, subject placement, and texture—is an inefficient use of compute and time.

The first moment of limitation for most users comes when they realize that no matter how descriptive the prompt, the AI has no inherent understanding of “brand safety” or “physics.” If a generated hand has six fingers, a better prompt won’t necessarily fix it without changing the rest of the person’s pose. This is the first reset of expectations: AI is an assistant that requires manual correction, not a self-governing director.

Regional Changes as a Production Standard

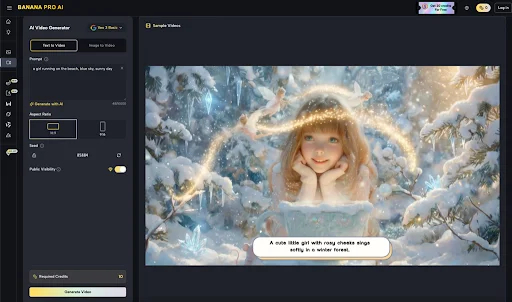

Regional editing, often referred to as inpainting, allows an editor to mask a specific area and re-generate only those pixels. In the context of Banana AI, this isn’t just about fixing errors; it’s about creative pivot points.

Consider a storyboard for a commercial. You have a perfect shot of a kitchen, but the refrigerator is the wrong color, or there is a stray reflection on the countertop. Instead of discarding the asset, an AI Image Editor allows you to isolate the fridge and swap its material properties while keeping the ambient lighting of the room identical. This level of precision is what makes the technology viable for commercial use.
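The core idea behind the fridge swap can be sketched in a few lines. This is a minimal illustration of mask-based compositing, not a description of how any particular editor is implemented: the `composite_region` helper, the tiny 2×3 "kitchen", and the color values are all hypothetical, and the regenerated pixels are a stand-in for whatever a model would return.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]
Mask = List[List[bool]]


def composite_region(original: Image, regenerated: Image, mask: Mask) -> Image:
    """Return a new image that takes regenerated pixels only where mask is True."""
    if not (len(original) == len(regenerated) == len(mask)):
        raise ValueError("original, regenerated, and mask must have the same height")
    result: Image = []
    for orig_row, new_row, mask_row in zip(original, regenerated, mask):
        if not (len(orig_row) == len(new_row) == len(mask_row)):
            raise ValueError("rows must have the same width")
        # Keep the old pixel unless the mask selects this position.
        result.append(
            [new if selected else old
             for old, new, selected in zip(orig_row, new_row, mask_row)]
        )
    return result


# Hypothetical 2x3 "kitchen": the fridge occupies the right-hand column.
WALL = (200, 190, 180)
FRIDGE_WHITE = (255, 255, 255)
FRIDGE_STEEL = (120, 125, 130)

kitchen = [[WALL, WALL, FRIDGE_WHITE],
           [WALL, WALL, FRIDGE_WHITE]]
# Stand-in for a model's output: every pixel comes back as steel.
regenerated = [[FRIDGE_STEEL] * 3 for _ in range(2)]
# Only the fridge column is selected for replacement.
fridge_mask = [[False, False, True],
               [False, False, True]]

edited = composite_region(kitchen, regenerated, fridge_mask)
```

Even though the stand-in output repaints the whole frame, only the masked column changes in `edited`, and the original `kitchen` is left untouched. That is the property that makes a regional edit safe to hand back to a client.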

The Role of Nano Banana Pro in High-Resolution Output

The jump from a 512×512 preview to a production-ready 4K asset is where many models fail. They lose detail or introduce strange “hallucinations” when upscaled. The Nano Banana Pro architecture is designed to handle these higher resolutions by maintaining semantic consistency across larger pixel grids.

When you use Banana Pro for regional edits, the model reads the unmasked pixels around the mask as context. The goal is that if you are adding a window to a brick wall, the bricks surrounding the window remain untouched, and the shadows cast by the new window frame match the existing light source in the scene. Without this context-aware rendering, inpainting looks like a poorly executed collage.

Inpainting and the Video Production Pipeline

For video editors, the stakes are even higher. Consistency is the hardest thing to achieve in generative video. If you change a character’s shirt in frame one, that shirt needs to exist in the same way in frame sixty.

Integrating Nano Banana into a video workflow usually involves a “keyframe-first” strategy. You generate your primary image, use the AI Image Editor features to perfect every detail of that frame, and then use that perfected image as the seed for the video generation.

This process isn’t without its hurdles. We must acknowledge a second significant limitation: temporal consistency in regional video edits is still an emerging science. If you inpaint a hat onto a walking person, the AI may struggle to maintain the hat’s perspective as the head tilts. It requires an operator’s eye to know when the AI has reached its limit and when manual tracking or traditional compositing in a tool like After Effects must take over.

Workflow: From Concept to Polished Asset

A typical professional workflow using Banana Pro follows this sequence:

1. The Base Generation: Use a broad prompt to establish the “vibe,” lighting, and general composition. At this stage, you are looking for the right “bones,” not a finished product.

2. Structural Masking: Use the canvas tools to move or remove large elements. If a tree is blocking the subject, mask it out and let the background fill in naturally.

3. Detail Refinement with Nano Banana: This is where you address the “last 10 percent.” Fix the eyes, refine the textures of a garment, or adjust the specific brand colors of a product.

4. Upscaling and Final Grading: Once the regional edits are locked, the image is upscaled. The final pass usually happens in a traditional photo editor to ensure color accuracy across different display profiles.

By breaking the process down, the editor maintains creative agency. You aren’t just choosing from a list of options provided by the machine; you are directing the machine to execute specific tasks within a defined space.
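One way to keep that sequence honest is to record each step as an explicit, ordered edit rather than a pile of re-rolled prompts. The sketch below is only a bookkeeping illustration under stated assumptions: `Asset`, `Edit`, the stage names, the instructions, and the pixel boxes are hypothetical and do not correspond to any real tool's API. It records what was asked for and where; it does not generate anything.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # left, top, right, bottom in pixels


@dataclass
class Edit:
    stage: str                   # e.g. "base", "structure", "detail", "upscale"
    instruction: str             # what the operator asked for
    region: Optional[Box] = None  # None means the whole canvas


@dataclass
class Asset:
    name: str
    history: List[Edit] = field(default_factory=list)

    def apply(self, stage: str, instruction: str,
              region: Optional[Box] = None) -> "Asset":
        """Append one edit to the history and return self for chaining."""
        self.history.append(Edit(stage, instruction, region))
        return self

    def regional_edits(self) -> List[Edit]:
        """Edits that were confined to a masked region."""
        return [edit for edit in self.history if edit.region is not None]


# Hypothetical run through the four stages listed above.
asset = (
    Asset("kitchen_shot_01")
    .apply("base", "warm morning kitchen, wide lens")
    .apply("structure", "remove tree blocking the window", region=(40, 10, 220, 180))
    .apply("detail", "brushed steel fridge door", region=(300, 60, 420, 400))
    .apply("upscale", "upscale and hand off for grading")
)

for edit in asset.history:
    scope = edit.region if edit.region is not None else "full canvas"
    print(f"{edit.stage:10s} {scope!s:22s} {edit.instruction}")
```

A log like this also doubles as a revision record: when a client asks what changed between versions, the answer is a list of regions and instructions rather than a guess.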

Why Specific Models Matter

Not all models are created equal when it comes to regional control. Some models are “over-trained” on specific aesthetics, making them stubborn when you try to change a detail. If a model is trained exclusively on cinematic portraits, it might struggle to inpaint a technical diagram or a minimalist product shot.

The flexibility of Nano Banana lies in its balance. It is trained to be responsive to the mask. In many standard tools, the “noise strength” of the inpainting is hard to calibrate—either the change is too subtle to notice, or it’s so drastic that it ignores the surrounding image. Finding that “Goldilocks zone” where the new pixels blend seamlessly with the old is the hallmark of a production-ready tool.
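To see why that setting is touchy, it helps to look at how it is often defined. The sketch below assumes one common convention in open-source diffusion pipelines, where a strength value between 0 and 1 scales how many denoising steps are actually run on the masked region. It is an assumption for illustration, not a description of how Nano Banana works internally, and `denoising_steps` is a hypothetical helper.

```python
def denoising_steps(total_steps: int, strength: float) -> int:
    """Steps actually run on the masked region for a strength in [0, 1].

    Assumed convention: strength scales the step count, so low values
    barely disturb the original pixels and 1.0 regenerates from pure noise.
    """
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return min(int(total_steps * strength), total_steps)


# Compare a subtle, a moderate, and an aggressive setting on a 50-step schedule.
for strength in (0.0, 0.5, 1.0):
    print(f"strength={strength:.1f} -> {denoising_steps(50, strength)} of 50 steps")
```

Under this convention the two failure modes in the paragraph above sit at opposite ends of one dial: near zero, almost nothing is regenerated, and near one, the original content inside the mask is discarded entirely.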

Managing Client Expectations in the AI Era

As these tools become more prevalent, the bottleneck is no longer the software, but the communication between the creator and the client. Clients who see AI as a “magic button” may expect instantaneous revisions. However, high-quality regional editing still takes time. It requires selecting the right masks, adjusting the prompt for the specific region, and often running multiple iterations to get the lighting exactly right.

It is important to be transparent about what the Banana AI can do versus what requires manual labor. AI is excellent at texture, lighting, and general form. It is often less reliable with specific text, complex mechanical parts, or exact human anatomy in distorted perspectives. Being an “operator-led” creator means knowing when to stop prompting and start painting.

The Strategic Advantage of Localized Editing

From a business perspective, the ability to perform regional changes can save thousands of dollars in re-shoots and hours of manual retouching. If a model’s expression is too somber, inpainting a slight smile is a five-minute task rather than a scrapped production day.

For agencies, this means the “cost of change” has plummeted. In the past, major revisions late in the game were budget-killers. With the Nano Banana Pro workflow, those revisions become part of the standard polish phase. This allows for more experimentation and, ultimately, a better final product.

Looking Ahead: The Future of the Canvas

We are moving toward a future where the distinction between “creating” and “editing” disappears. In a few years, we likely won’t talk about “inpainting” as a separate feature; it will simply be how we interact with all digital media. You will point at an object and describe a change, and the underlying model will understand the spatial and temporal consequences of that request.

Until then, the competitive advantage belongs to the editors who master the “last 10 percent”: the people who can take a raw AI generation and whip it into a professional, brand-compliant, and technically sound asset. Tools like Banana Pro provide the infrastructure, but the judgment, the eye for detail, and the understanding of composition remain firmly in the hands of the human operator.

Generative AI is a powerful engine, but without the steering wheel of regional editing, it’s just a car idling in the driveway. By focusing on iterative control rather than one-shot prompts, creators can finally move past the novelty of AI and start using it as a serious tool for serious work.
